Growth · Part of Quality Assurance

Calibration sessions + blind scoring

Multiple evaluators score the same call blind, then reveal their scores and discuss the variance. The platform records both the variance and the resolution, so calibration becomes data, not folklore.
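Here is a minimal sketch of what "calibration as data" might look like for one call. The record holds the blind scores, their variance and spread, and the score the group agreed on. The `CalibrationRecord` type and the `record_resolution` helper are hypothetical illustrations, not the platform's schema. Using population variance as the spread measure is also an assumption.

```python
from dataclasses import dataclass
from statistics import pvariance


@dataclass(frozen=True)
class CalibrationRecord:
    """Hypothetical record of one calibrated call (not the platform's real schema)."""
    call_id: str
    blind_scores: dict[str, float]  # evaluator -> score submitted blind
    variance: float                 # population variance of the blind scores
    spread: float                   # highest minus lowest blind score
    resolved_score: float           # score the group agreed on after discussion


def record_resolution(call_id, blind_scores, resolved_score):
    """Build a record once scores are revealed and the group has agreed."""
    values = list(blind_scores.values())
    if len(values) < 2:
        raise ValueError("calibration needs at least two evaluators")
    return CalibrationRecord(
        call_id=call_id,
        blind_scores=dict(blind_scores),
        variance=pvariance(values),
        spread=max(values) - min(values),
        resolved_score=resolved_score,
    )


# Hypothetical session: three evaluators, agreed score of 86.
rec = record_resolution("call-123", {"ana": 82, "ben": 90, "cy": 86}, resolved_score=86)
print(rec.variance, rec.spread)
```

Because each record keeps the blind scores alongside the resolution, later analysis can compare any evaluator against the agreed outcome.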

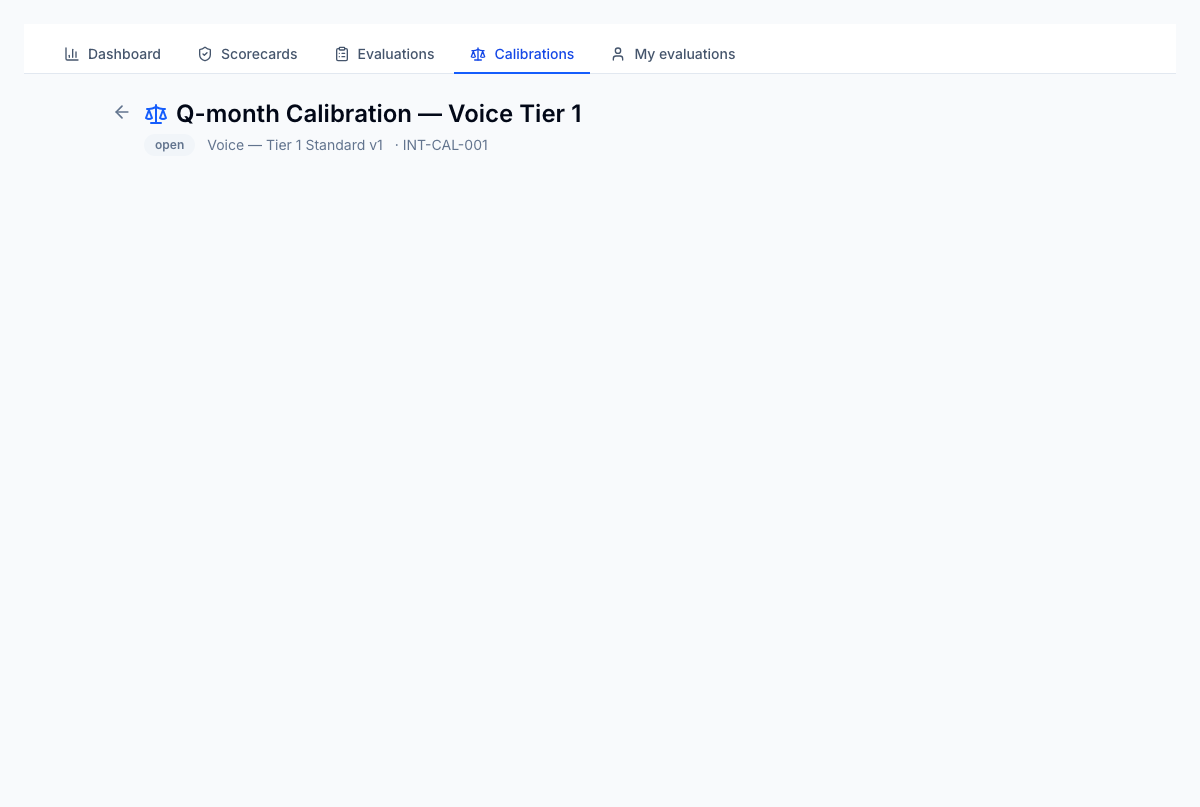

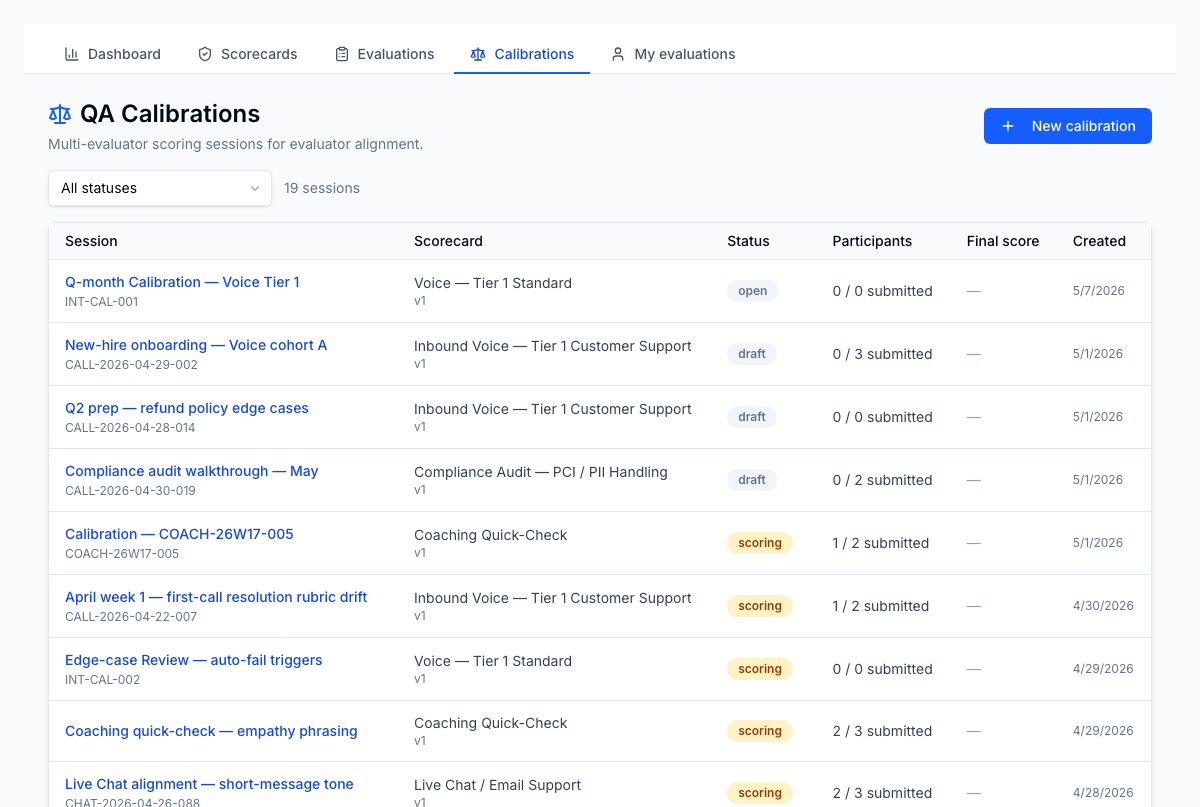

Calibration sessions: every multi-evaluator session, with its scorecard, participant count, status (draft/scoring/resolved), and final score once concluded.
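The three statuses in the session list suggest a simple lifecycle. The sketch below assumes that a session moves from draft to scoring to resolved. It also assumes a blind rule: an evaluator sees only their own score until every participant has submitted. The class, its methods, and the scorecard name are hypothetical.

```python
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"
    SCORING = "scoring"
    RESOLVED = "resolved"


class CalibrationSession:
    """Hypothetical session model: blind scores stay hidden until all are in."""

    def __init__(self, scorecard: str, participants: list[str]):
        if len(set(participants)) < 2:
            raise ValueError("calibration needs at least two evaluators")
        self.scorecard = scorecard
        self.participants = set(participants)
        self.status = Status.DRAFT
        self._scores: dict = {}
        self.final_score: Optional[float] = None

    def start(self) -> None:
        if self.status is not Status.DRAFT:
            raise RuntimeError("session already started")
        self.status = Status.SCORING

    def submit(self, evaluator: str, score: float) -> None:
        if self.status is not Status.SCORING:
            raise RuntimeError("session is not accepting scores")
        if evaluator not in self.participants:
            raise KeyError(evaluator)
        if evaluator in self._scores:
            raise ValueError("blind scores cannot be revised")
        self._scores[evaluator] = score

    @property
    def revealed(self) -> bool:
        # Scores unlock only once every participant has scored blind.
        return self._scores.keys() == self.participants

    def visible_scores(self, viewer: str) -> dict:
        if self.revealed:
            return dict(self._scores)
        # Before the reveal, an evaluator sees only their own score.
        return {k: v for k, v in self._scores.items() if k == viewer}

    def resolve(self, final_score: float) -> None:
        if self.status is not Status.SCORING or not self.revealed:
            raise RuntimeError("cannot resolve before all blind scores are in")
        self.final_score = final_score
        self.status = Status.RESOLVED


demo = CalibrationSession("Inbound support v3", ["ana", "ben"])
demo.start()
demo.submit("ana", 80)
print(demo.visible_scores("ben"))  # {}
demo.submit("ben", 88)
print(demo.visible_scores("ben"))  # {'ana': 80, 'ben': 88}
demo.resolve(84)
```

Keeping the reveal rule in the model itself, rather than in the UI, means no client can see another evaluator's score early.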


For the operator

Run calibration sessions in which multiple evaluators score the same call blind, then reveal their scores and discuss the variance in a structured session view. The platform stores the variance and the resolution as data. Calibration therefore becomes an analyzable dataset rather than a meeting people remember selectively. When an evaluator drifts away from the group, the drift shows up in trend reports instead of being noticed three months too late.
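Here is one simple way such drift could be detected. Track each evaluator's mean deviation from the resolved score in each period, then flag anyone whose latest deviation exceeds a threshold. This sketch assumes that each record stores the period, the evaluator, the blind score, and the resolved score. The record shape, the `"YYYY-MM"` period strings, and the 5-point threshold are all assumptions. A production trend report might use rolling windows or a significance test instead.

```python
from collections import defaultdict
from statistics import mean


def evaluator_bias_by_period(records):
    """Mean (blind score - resolved score) per evaluator per period.

    records: iterable of (period, evaluator, blind_score, resolved_score),
    where period is a sortable string such as "2024-03" (an assumed format).
    """
    buckets = defaultdict(lambda: defaultdict(list))
    for period, evaluator, blind, resolved in records:
        buckets[evaluator][period].append(blind - resolved)
    return {
        evaluator: {period: mean(deltas) for period, deltas in sorted(periods.items())}
        for evaluator, periods in buckets.items()
    }


def flag_drift(bias, threshold=5.0):
    """Flag evaluators whose bias in their most recent period exceeds the threshold."""
    flagged = {}
    for evaluator, by_period in bias.items():
        latest = max(by_period)  # lexical max works for "YYYY-MM" strings
        if abs(by_period[latest]) > threshold:
            flagged[evaluator] = (latest, by_period[latest])
    return flagged


# Hypothetical history: ben starts scoring well above the agreed score.
history = [
    ("2024-01", "ana", 84, 85),
    ("2024-01", "ben", 86, 85),
    ("2024-02", "ana", 83, 84),
    ("2024-02", "ben", 93, 84),
    ("2024-03", "ben", 95, 86),
]
print(flag_drift(evaluator_bias_by_period(history)))  # {'ben': ('2024-03', 9)}
```

Signed deviation from the agreed score is used here because it separates lenient from harsh drift, which raw variance would not.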

Business impact

Inter-rater reliability is what makes QA scores defensible to clients and to agents. When reliability erodes, every QA-driven decision (coaching, performance management, terminations) becomes contestable. Treating calibration as data lets you measure the reliability gap and close it. It also produces the inter-rater statistics that defensible QA programs rely on. Cleaner scores; defensible decisions; fewer escalations.
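The section doesn't say which inter-rater statistics the platform reports. One standard example is Fleiss' kappa. It measures agreement among a fixed number of evaluators who assign categorical ratings, such as pass/fail scorecard items, and corrects for the agreement expected by chance. The sketch below is a plain implementation of the textbook formula. The input layout and item data are hypothetical. Continuous scores would call for a different statistic, such as an intraclass correlation or Krippendorff's alpha.

```python
def fleiss_kappa(counts):
    """Fleiss' kappa for N items, each rated by the same n evaluators into k categories.

    counts[i][j] is the number of evaluators who put item i in category j.
    """
    N = len(counts)
    if N == 0:
        raise ValueError("need at least one rated item")
    n = sum(counts[0])
    k = len(counts[0])
    if n < 2 or any(len(row) != k or sum(row) != n for row in counts):
        raise ValueError("every item needs the same number (>= 2) of ratings")
    # Share of all ratings that landed in each category.
    p = [sum(row[j] for row in counts) / (N * n) for j in range(k)]
    # Observed agreement per item: share of evaluator pairs that agree.
    P_i = [(sum(c * c for c in row) - n) / (n * (n - 1)) for row in counts]
    P_bar = sum(P_i) / N
    # Agreement expected by chance, given the category shares.
    P_e = sum(pj * pj for pj in p)
    if P_e == 1:
        raise ValueError("all ratings fall in one category; kappa is undefined")
    return (P_bar - P_e) / (1 - P_e)


# Hypothetical pass/fail results for four scorecard items, three evaluators each.
items = [[3, 0], [2, 1], [0, 3], [3, 0]]
print(f"{fleiss_kappa(items):.3f}")  # prints 0.625
```

A kappa of 1 means perfect agreement. A value near 0 means the evaluators agree no more often than chance would predict.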